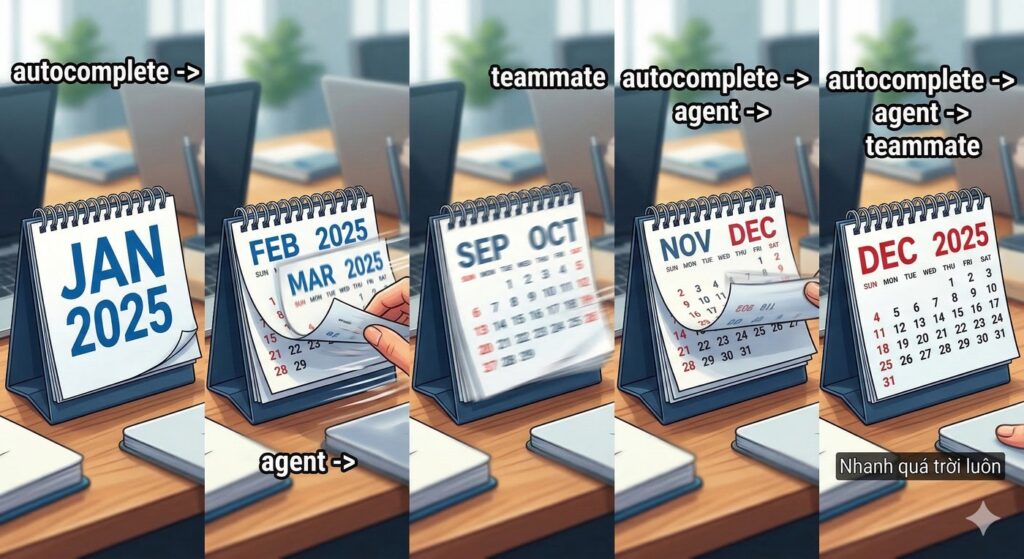

There was a weird moment around late February 2025 when a lot of us had the same reaction at the same time: “Wait… this is not autocomplete anymore, right?”

Not because models suddenly became perfect. They were not. They still are not. But they started behaving like junior teammates who could plan a bit, use tools, run commands, attempt tests, and report back with receipts. That shift was emotional and operational at once. You felt it in daily work, not in benchmark slides.

And yes, the key trigger many of us remember is real: Anthropic’s Claude 3.7 Sonnet and the Claude Code preview landed on February 24, 2025 [R1]. That date matters because the industry conversation went from “prompting tricks” to “agentic coding workflows” almost overnight.

This article makes that thesis practical: the timeline, why March 2025 felt messy, who gained what, where context engineering overtook prompt engineering, and why 2026 is less about one model and more about reliable human-agent operating systems.

Quick Summary

If you have 90 seconds, this is the core:

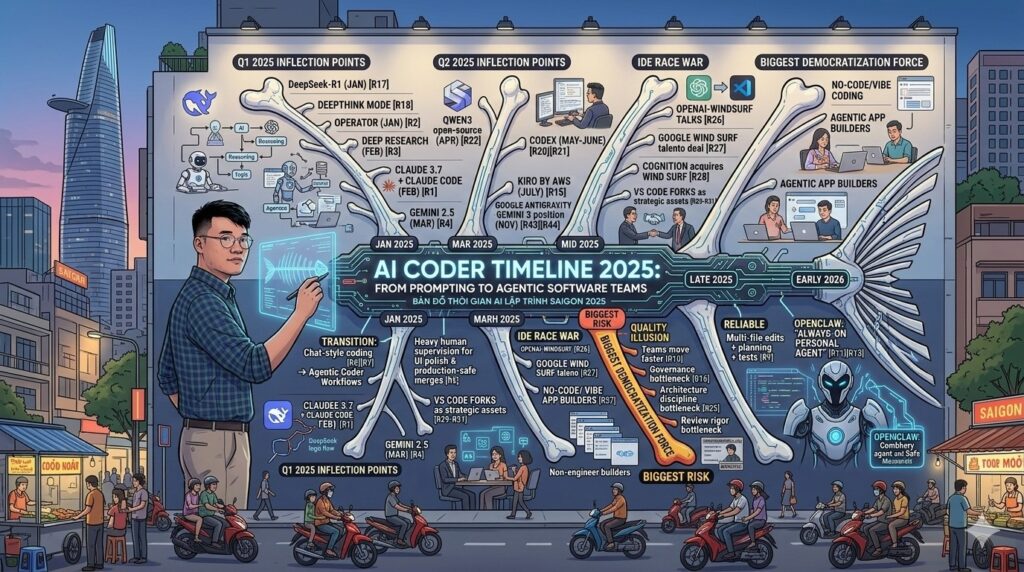

- Early 2025 was the transition from chat-style coding help to agentic coding: tools, terminal execution, multi-step plans, and evidence loops.

- Inflection points were concentrated: DeepSeek-R1 + DeepThink mode (Jan), Operator (Jan), deep research (Feb), Claude 3.7 + Claude Code (Feb), Gemini 2.5 (Mar), Qwen3 open-source reasoning family (Apr), Codex (May-June), Kiro by AWS (July), and Google Antigravity with Gemini 3 autonomous workflow positioning (Nov) [R1][R2][R3][R4][R15][R17][R18][R20][R21][R22][R43][R44].

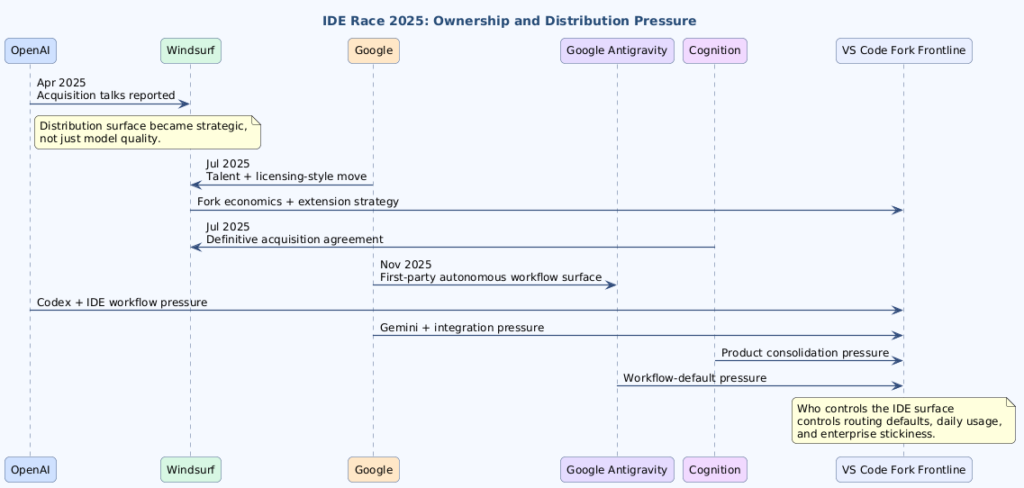

- Mid-2025 also became an IDE race war: OpenAI-Windsurf talks, Google’s Windsurf talent+licensing move, then Cognition acquiring Windsurf, while VS Code forks became strategic distribution assets [R26][R27][R28][R29][R30][R31].

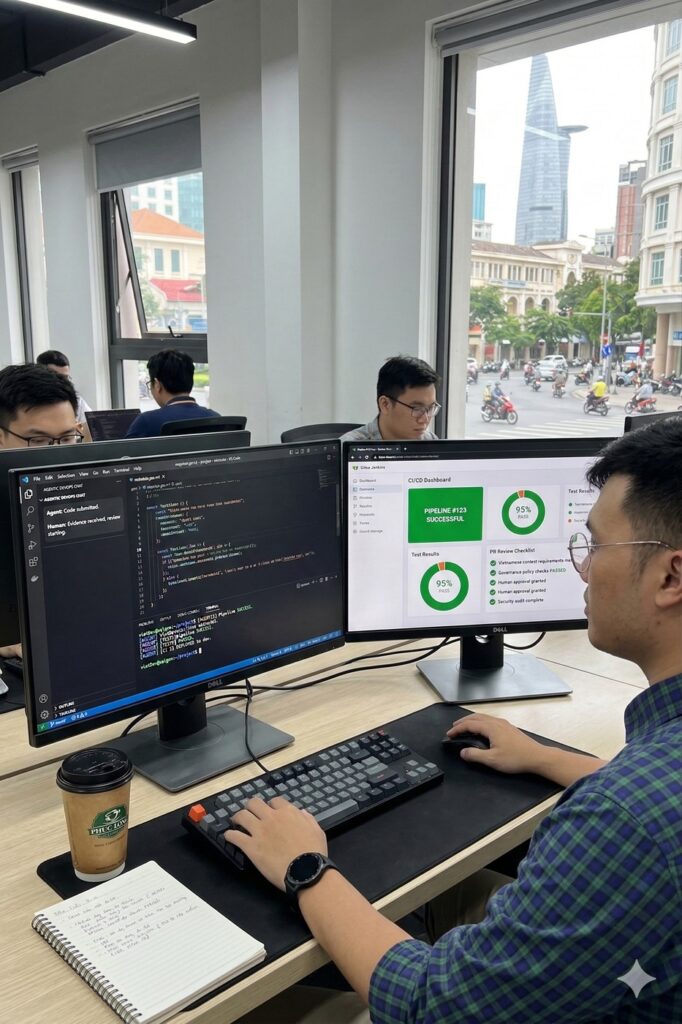

- In March 2025, human supervision was still heavy for UI polish and production-safe merges.

- By late 2025, multi-file edits + planning + tests became more reliable across serious tools.

- By early 2026, OpenClaw (formerly Clawdbot/Moltbot) pushed the “always-on personal agent” model outside IDE-only sessions.

- Biggest democratization force: no-code/vibe coding and agentic app builders that let non-engineers ship usable tools.

- Biggest risk: quality illusion. Teams moved faster, but governance, architecture discipline, and review rigor became the bottleneck.

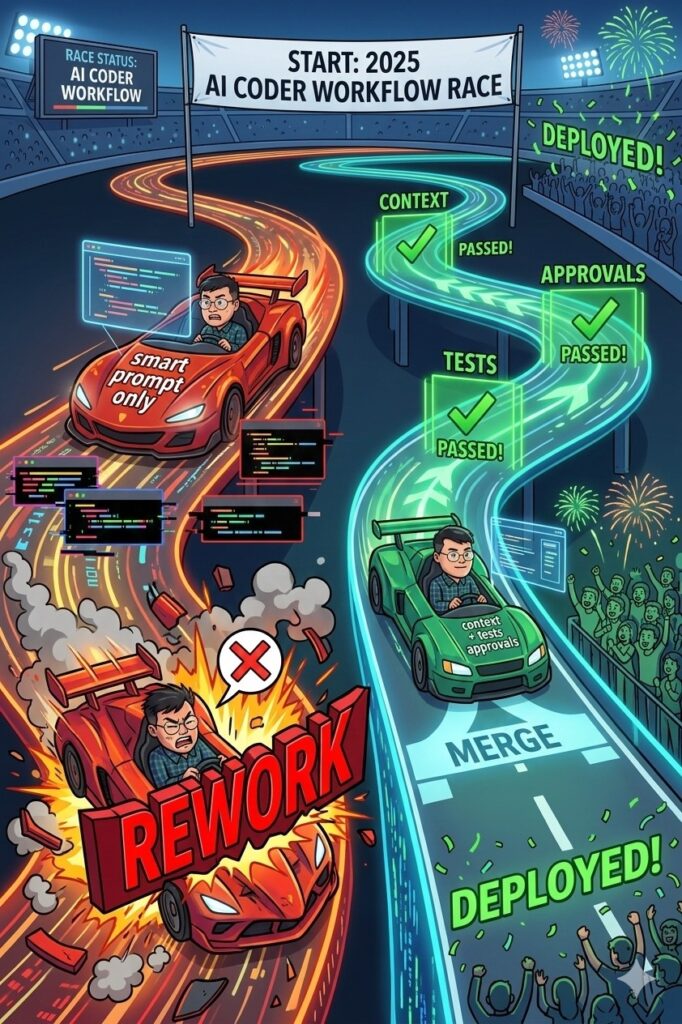

0) The Real Upgrade: Prompting to SDLC-Aware Planning

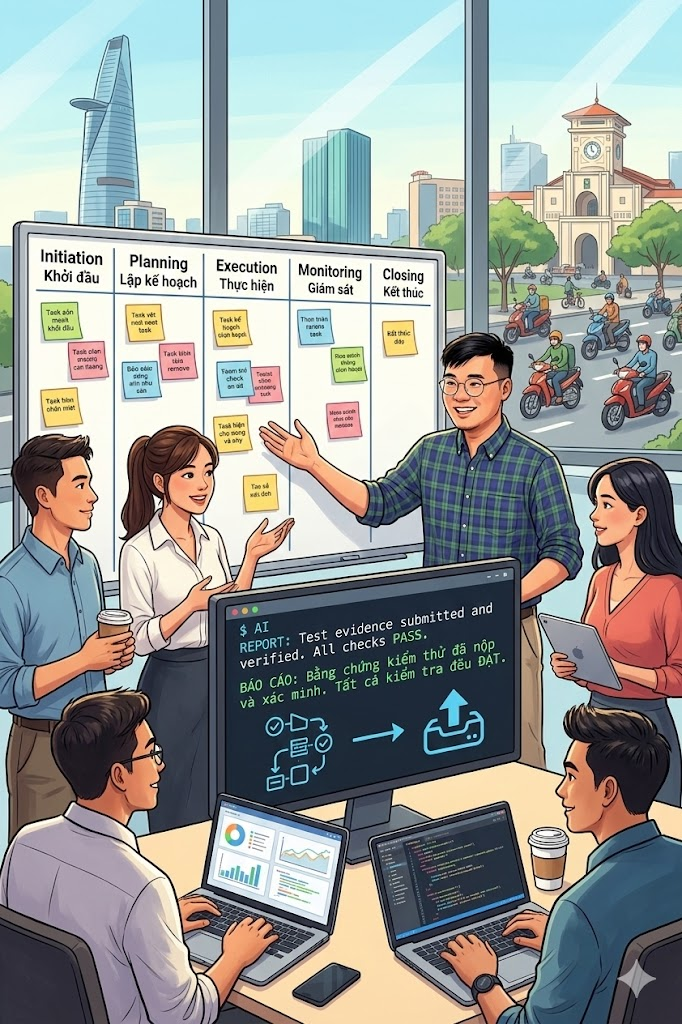

Let’s be honest: 2025 was not mainly a “better prompt” story. It was an SDLC-aware execution loop story.

What changed in real teams?

- Initiation: clarify business intent and constraints before touching code.

- Planning: define scope, boundaries, acceptance criteria, rollback logic.

- Execution: let agents implement scoped tasks.

- Monitoring: require evidence (tests, logs, screenshots, diffs).

- Closing & support: human review, merge discipline, maintenance loop.

This is why context engineering became serious. If you just say “build feature X,” you might get speed but random outputs. If you provide constraints + explicit validation gates, you get speed with much less chaos. Isn’t that exactly the tradeoff most teams were missing in 2024?
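To make "constraints + explicit validation gates" concrete, here is a minimal sketch of what a scoped task handed to an agent could look like. Everything in it is hypothetical and for illustration only: the `TaskSpec` fields, the evidence names, and the example feature are assumptions, not any vendor's format.

```python
from dataclasses import dataclass, field


@dataclass
class TaskSpec:
    """A scoped task handed to a coding agent (hypothetical structure)."""
    intent: str
    constraints: list[str]
    acceptance_criteria: list[str]
    rollback_plan: str
    # Evidence the agent must return before a human looks at the change.
    required_evidence: set[str] = field(default_factory=lambda: {"tests", "diff"})


def missing_evidence(spec: TaskSpec, submitted: dict[str, str]) -> list[str]:
    """Return the evidence types that are absent or empty in the submission."""
    return sorted(kind for kind in spec.required_evidence if not submitted.get(kind))


def ready_for_human_review(spec: TaskSpec, submitted: dict[str, str]) -> bool:
    """The gate passes only when every required artifact is present."""
    return not missing_evidence(spec, submitted)


spec = TaskSpec(
    intent="Add CSV export to the reports page",
    constraints=["Do not change the public API", "No new dependencies"],
    acceptance_criteria=["Export matches on-screen filters"],
    rollback_plan="Disable the csv_export feature flag",
    required_evidence={"tests", "diff", "screenshots"},
)
submitted = {"tests": "42 passed", "diff": "3 files changed"}
print(missing_evidence(spec, submitted))        # ['screenshots']
print(ready_for_human_review(spec, submitted))  # False
```

The point of the sketch is the shape, not the fields: the agent gets speed inside the scope, and the gate decides when a human needs to look.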

1) Chronological Timeline: February 2025 to Early 2026

1.1 Timeline Table

| Time | What Happened | Why It Mattered |

|---|---|---|

| Jan 2025 | OpenAI announced Operator research preview | Mainstreamed the expectation that assistants should take actions, not only answer [R2] |

| Jan 20, 2025 | DeepSeek released DeepSeek-R1 (deepseek-reasoner) and pushed DeepThink/Thinking Mode workflows in public docs | China-origin reasoning models became impossible to ignore in global coding workflows [R17][R18] |

| Jan 27, 2025 | U.S. AI market shock around DeepSeek narrative (AP reported sharp declines in Nvidia/Nasdaq/S&P 500 that day) | Marked a geopolitical + competitive wake-up call for U.S. AI incumbents [R19] |

| Feb 2, 2025 | OpenAI launched deep research in ChatGPT | Normalized asynchronous multi-step research/agent workflows [R3] |

| Feb 24, 2025 | Anthropic released Claude 3.7 Sonnet and previewed Claude Code | Major coding jump; terminal-native agent workflow became practical for many teams [R1] |

| Mar 2025 | Lovable and similar builders accelerated vibe coding + visual app generation | Non-developer shipping velocity increased meaningfully [R5] |

| Mar 2025 | Google released Gemini 2.5 Pro Experimental in AI Studio/Gemini surfaces | Raised coding+reasoning baseline with broad access [R4] |

| Apr 29, 2025 | Alibaba released Qwen3 with open models and hybrid thinking/non-thinking modes | Reinforced China’s open-model momentum for practical coding and agent tasks [R22] |

| Apr 17, 2025 | Reuters reported OpenAI in talks to acquire Windsurf for about $3B | Marked the start of visible M&A pressure around AI IDE distribution [R26] |

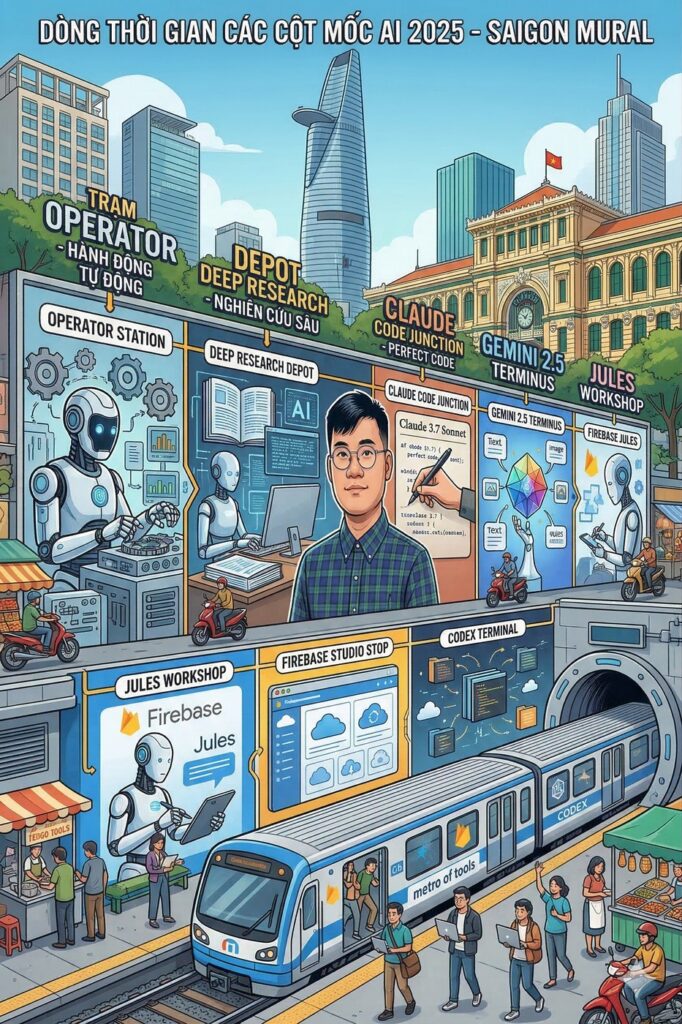

| Apr-May 2025 | Google expanded coding-agent surface with Jules and Firebase Studio pathways | Asynchronous cloud coding patterns became mainstream for broader audiences [R6][R7] |

| May 16, 2025 | OpenAI launched Codex research preview in ChatGPT | Made cloud task delegation for software work a mainstream workflow [R20] |

| Jun 3, 2025 | OpenAI published major Codex updates (including internet access and PR-oriented improvements) | Signaled rapid iteration toward practical software team usage [R21] |

| Jul 11, 2025 | Google hired Windsurf’s CEO and parts of its R&D team with a reported $2.4B licensing-style deal | Showed hyperscaler urgency to secure IDE talent and product distribution [R27] |

| July 2025 | Kiro by AWS entered public preview focus, explicitly pushing spec-driven development and agent workflows | Put structured requirements/design/tasks directly into the AI IDE mainstream [R15][R16] |

| Jul 14, 2025 | Cognition signed a definitive agreement to acquire Windsurf | Confirmed that AI IDE ownership had become a direct competitive moat [R28][R29] |

| Nov 18, 2025 | Google announced Gemini 3 for software developers and introduced Google Antigravity for autonomous workflows | Marked Google’s first-party autonomous workflow surface in the same IDE/runtime battlefield [R43][R44][R45] |

| H2 2025 | IDE ecosystem accelerated planning modes, model routing, MCP integrations | Context plumbing became a product differentiator [R8][R9] |

| H2 2025 | China open-source model ecosystem broadened (e.g., DeepSeek open releases, GLM-4.5 open model family) | Increased model optionality and pricing/quality pressure worldwide [R23][R24] |

| Late 2025 | Repo instruction files and context-engineering workflows became standard | Prompt cleverness alone stopped being enough [R10] |

| Late 2025 – Q1 2026 | OpenClaw popularized chat-surface + local runtime + model orchestration | Attention shifted from “AI inside IDE” to “AI operating layer across tools” [R11] |

| Early 2026 | Multimodal + browser + terminal + memory + connectors started converging | Role evolved from “AI tool user” to “AI workflow designer” |

1.2 Plain-English Timeline Block

- Q1 2025: the market learns that actions > answers.

- Q2 2025: multi-tool orchestration becomes daily practice.

- H2 2025: teams learn the painful part: quality control, not generation speed, is the true bottleneck.

- Q1 2026: continuous agents begin to feel normal.

1.3 Five Moments That Changed the Slope

- DeepSeek R1 + Thinking Mode moment (January 2025): this was the clearest China-origin shock to U.S.-centric AI narratives in coding/reasoning workflows [R17][R18][R19].

- Codex cloud-delegation moment (May-June 2025): OpenAI made asynchronous software-task delegation mainstream for many teams [R20][R21].

- Kiro-by-AWS spec-driven moment (July 2025): specs/workflows moved from docs practice into first-class IDE behavior [R15][R16].

- IDE distribution-war moment (April-July 2025): acquisition talks, acqui-hire licensing deals, and ownership changes around VS Code-fork IDEs became headline strategy [R26][R27][R28][R29][R30][R31].

- Antigravity platform moment (November 2025): Google launched a first-party autonomous workflow surface and tied it directly to Gemini 3 developer positioning [R43][R44][R45].

Google officially introduced Antigravity in its Gemini 3 developer announcement on November 18, 2025, and the public codelab describes autonomous workflow building directly on that surface [R43][R44][R45].

2) March 2025 Reality Check: You Were Right

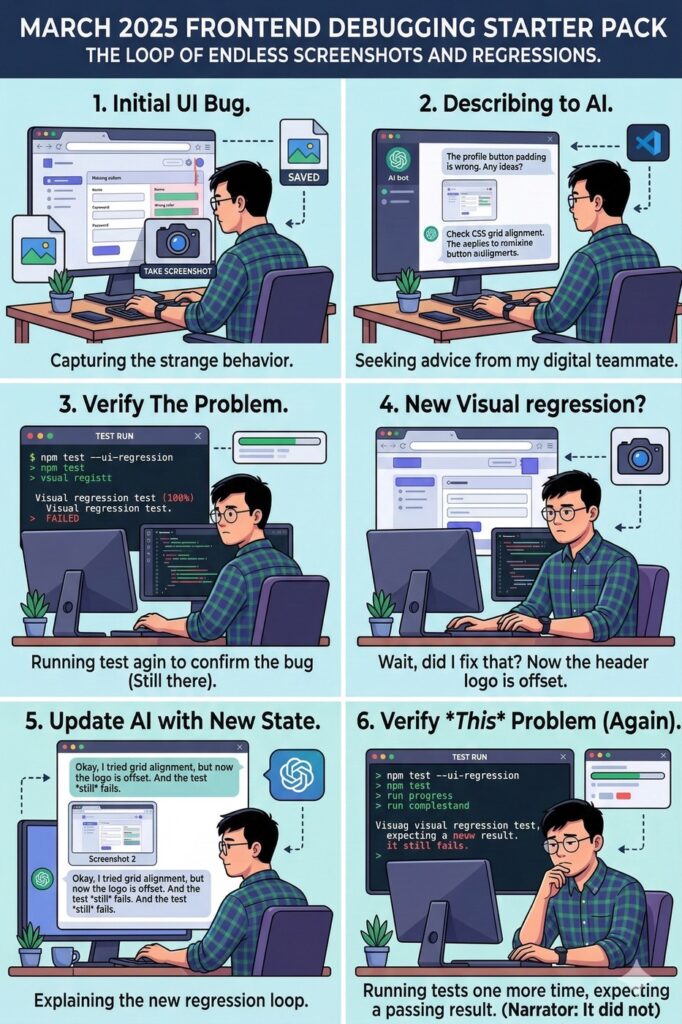

March 2025 was exciting, but messy. Boilerplate and prototype speed were great. Deep architecture decisions? Still shaky. Frontend polish? Often screenshot-loop hell. Production confidence? Limited.

This was the daily grind: capture UI -> paste screenshot -> ask model to fix -> rerun -> repeat. Did it work? Sometimes. Was it stable? Not consistently.

2.1 Failure Pattern Ledger

| Failure Pattern | Observable Symptom | Why It Happened | Evidence Signal You Can Capture | Fix That Reduced Pain |
|---|---|---|---|---|
| Screenshot-loop UI fixes | Same UI bug returns after each patch | Weak visual grounding, CSS side effects | Sequence of screenshot diffs + repetitive review comments | Visual regression checks + stricter UI constraints |
| Multi-file drift | One fix breaks logic in adjacent modules | Missing architectural boundaries in prompts | Cross-file diff conflicts + repeated rollback commits | Task decomposition + file-level acceptance criteria |
| Test-pass but behavior mismatch | Unit tests green but user flow still wrong | Incomplete scenario coverage | Failing E2E/manual acceptance evidence despite passing unit suite | Behavior-focused test cases + scenario checklists |
| Over-generated code bloat | Feature works but diff is bloated and hard to review | Agent optimized for completion, not maintainability | High churn in PR diff + reviewer requests for simplification | Max-diff limits + explicit refactor review gates |
| Governance rework before merge | Security/compliance objections appear late | Missing permission/risk model and HITL gates | Late-stage security comments or blocked approvals | Pre-execution policy checks + explicit approval modes |

This is the quality illusion problem in one table: high output volume can look like high progress, but rework can erase the gain. How many teams learned this the expensive way in 2025?
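One of the fixes in the ledger, a max-diff limit, is easy to picture as code. The sketch below assumes you feed it text in the tab-separated shape that `git diff --numstat` prints (added, deleted, path, with `-` for binary files). The 400-line default is an arbitrary illustrative threshold, not a recommendation from any tool.

```python
def diff_size(numstat: str) -> int:
    """Sum added + deleted lines from `git diff --numstat` style text."""
    total = 0
    for line in numstat.strip().splitlines():
        added, deleted, _path = line.split("\t", 2)
        # Binary files are reported with "-" counts; they are skipped here.
        if added == "-" or deleted == "-":
            continue
        total += int(added) + int(deleted)
    return total


def within_max_diff(numstat: str, max_changed_lines: int = 400) -> bool:
    """True when the change is small enough to review in one sitting."""
    return diff_size(numstat) <= max_changed_lines


sample = "120\t15\tsrc/export.py\n300\t40\tsrc/report.py\n-\t-\tassets/logo.png"
print(diff_size(sample))        # 475
print(within_max_diff(sample))  # False
```

A gate like this does not judge quality. It only forces an oversized change back into task decomposition before a reviewer has to face it.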

3) Major Players and Their 2025 Trajectories

The market did not produce one winner. It produced multiple strengths by workflow layer.

3.1 Comparison Table by 2025 Trajectory

| Player | Early 2025 Identity | Mid/Late 2025 Shift | Practical Strength | Common Weakness |
|---|---|---|---|---|
| Anthropic (Claude + Claude Code) | Strong coding jump + terminal agent preview | Better multi-step coding loops | Refactoring, codebase reasoning, tool discipline | Can be conservative without explicit constraints |
| OpenAI (reasoning + deep research + Codex path) | Reasoning/tool-use convergence | Async cloud+terminal workflows matured | Breadth of workflow patterns | Cost/latency + oversight still matter |
| Google (Gemini 2.5 + AI Studio, Jules, Firebase Studio) | Coding benchmark momentum + easy access | Strong web/frontend iteration and cloud workflows | Accessibility + multimodal prototyping | Quotas/rate limits + governance complexity |
| AWS Kiro | Spec-driven AI IDE + CLI framing | Pushed requirement-to-implementation loops into editor/runtime defaults | Strong structure for requirements/design/tasks workflow | Still requires disciplined team adoption to avoid process theater |
| China Open-Source Stack (DeepSeek, Qwen, GLM) | High-velocity open releases + reasoning emphasis | Hybrid thinking/tool-use and broader open-weight accessibility | Price-performance pressure + model optionality | Rapid release pace can complicate evaluation/governance |
| GitHub Copilot ecosystem | Enterprise footprint and IDE presence | More agentic layers + instruction-file alignment | Native GitHub integration | Behavior varies by model/context quality |
| Cursor/Windsurf IDE layer | AI-first daily editor workflows | Planning/previews/MCP evolution | Day-to-day coding velocity | Needs strong team conventions to avoid messy outputs |
| Lovable (vibe + no-code hybrid) | Non-technical builder acceleration | Stronger connectors + agentic app flow | Fast internal app/prototype shipping | Architectural brittleness without guardrails |
| JetBrains AI + Junie | Deep IDE workflow integration | More agentic planning/writing/testing in IDE | Language tooling + enterprise controls | Experience varies by model provider and setup |
| Cline + Aider + terminal-first agents | Open and portable workflows | Provider portability + repo-focused loops | Power-user productivity in real repos | Steeper operator skill requirement |

3.2 Who Felt Impact First?

- Individual developers and indie hackers.

- Startup product teams.

- Non-technical operators building internal tools.

That third wave was the quiet revolution. If marketing and operations people can ship working tools, what happens to the old boundary between “technical” and “non-technical” roles?

4) IDE Layer Expansion Was the Real Battlefield

Model vendors got headlines. IDE/runtime layers decided daily lived experience.

Cursor and Windsurf pushed orchestration habits. JetBrains moved deeper into agentic flows. Terminal-first stacks like Cline/Aider stayed powerful for operators who wanted direct control. In many teams, the question stopped being “Which model is best?” and became “Which operating loop is safest and fastest for this repo?”

4.1 IDE Race War (What Actually Happened)

Mid-2025 was not just feature competition; it was distribution competition:

- OpenAI was reported in talks to buy Windsurf (~$3B) [R26].

- That deal did not close; Google then hired Windsurf’s CEO and key R&D talent with a reported $2.4B licensing-style move [R27].

- Cognition then signed and announced a definitive agreement to acquire Windsurf [R28][R29].

That sequence is basically the IDE race in one paragraph. Who controls the coding surface controls user workflow, model routing defaults, and enterprise adoption velocity.

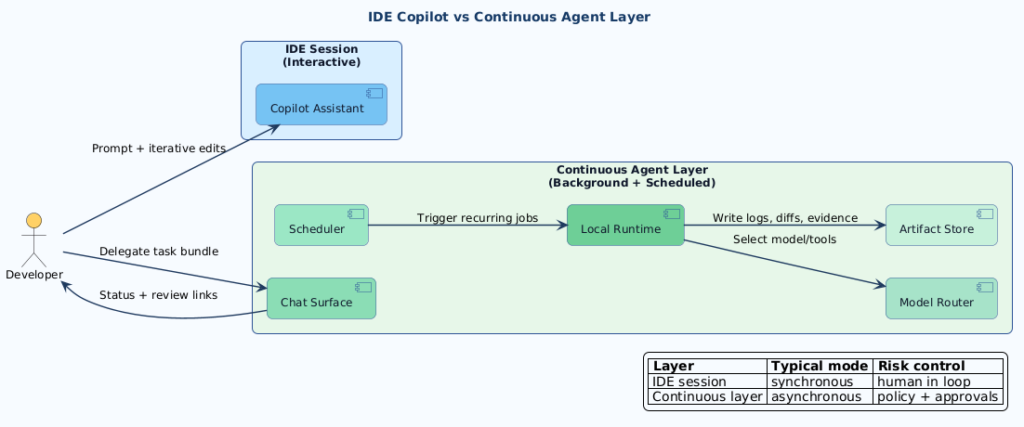


4.2 Why VS Code Forks Became Strategic Assets

Why were Cursor, Kiro, and Windsurf in the middle of this? Because all three are tied to VS Code fork economics and ecosystem constraints.

- Cursor states it is built from a fork of the VS Code codebase [R30].

- Windsurf publicly explains why it diverged from vanilla VS Code and documents constraints around extension marketplace compatibility [R31][R32].

- AWS built its own fork with Kiro, hoping to join the same race.

- Microsoft’s own VS Code FAQ explains marketplace usage limits for non-Microsoft products [R33].

So yes, this really was an IDE war, not just a model benchmark war.

4.3 About “OpenAI Bought It All”

Before the Windsurf saga, OpenAI had already done multiple strategic acquisitions in 2024, including Rockset (officially announced) and Multi (widely reported) [R34][R35]. Not IDE acquisitions, but still part of the same build-out logic: control more of the real developer workflow stack.

On February 14, 2026, Peter Steinberger, founder of OpenClaw, joined OpenAI and announced it with this note:

tl;dr: I’m joining OpenAI to work on bringing agents to everyone. OpenClaw will move to a foundation and stay open and independent.

4.4 Anthropic’s Path Was Different

Anthropic pushed hard through Claude Code plus IDE integrations (for example VS Code and JetBrains) rather than headline IDE acquisition moves [R1][R36]. Different route, same battlefield: win the daily developer surface.

4.5 Google Antigravity Was a Direct Platform Counter-Move

Antigravity should sit inside the IDE war story, not outside it. Google announced Antigravity in the Gemini 3 developer launch and described it as a way to turn ideas into autonomous workflows with prompt playground + deployment flow [R43][R44].

Why this matters: after the Windsurf talent/licensing move, Antigravity gave Google a first-party control surface for agent workflow defaults instead of relying only on third-party IDE dynamics [R27][R45].

5) OpenClaw (ex-Clawdbot/Moltbot): The Hybrid Inflection

Classic IDE copilot pattern:

- waits inside editor session,

- responds per prompt,

- primarily session-bound.

OpenClaw-style pattern:

- available across messaging/chat surfaces,

- orchestrates external models,

- keeps local state and history,

- supports ongoing/scheduled workflows.

Why does this matter? Because it reframes AI coding from a session helper to a continuous operations layer. Scheduled maintenance, recurring triage, background research, and long-running execution loops become first-class.


6) Capability Evolution Through 2025

| Capability | Q1 2025 | Q2 2025 | H2 2025 |
|---|---|---|---|
| Code generation quality | Good for standard patterns | Better reasoning-backed edits | More consistent in larger repos |
| Multi-file edits | Possible but fragile | Improved with planning flows | Common and more reliable |
| Terminal/test tool use | Early and inconsistent | Wider support in major tools | Became expected default |
| Frontend/UI handling | Often screenshot loops | Better previews + visual context | Stronger multimodal/browser support |
| Context handling | Bigger windows but lossy | Better retrieval/caching techniques | More mature context engineering practices |
| Non-tech usability | Prototype-friendly | Expanded rapidly via builders | Legitimate entry path into app shipping |
| Governance controls | Light in casual usage | Growing policy/permission features | Better enterprise controls, still uneven |

This maturity curve lines up with public release cadence across Claude Code, Codex, Kiro, Gemini, and DeepSeek reasoning/tool-use documentation [R1][R4][R15][R18][R20][R21].

7) Pros and Cons by Timeline Phase

| Phase | Pros | Cons |
|---|---|---|
| Feb-Mar 2025 | Fast prototypes, fast learning, quick demo conversion | Fragile production reliability, heavy QA burden, frontend mismatch loops |
| Apr-Jun 2025 | Better multi-step reasoning, stronger terminal/browser loops | Requirement ambiguity still caused drift; latency/cost pressure remained |
| H2 2025 | Better quality through specs/context engineering | Requirement quality and governance became mandatory bottlenecks |

The governance part is not hypothetical; vendor security bulletins and safer-by-default execution controls became far more visible during this phase [R16][R25].

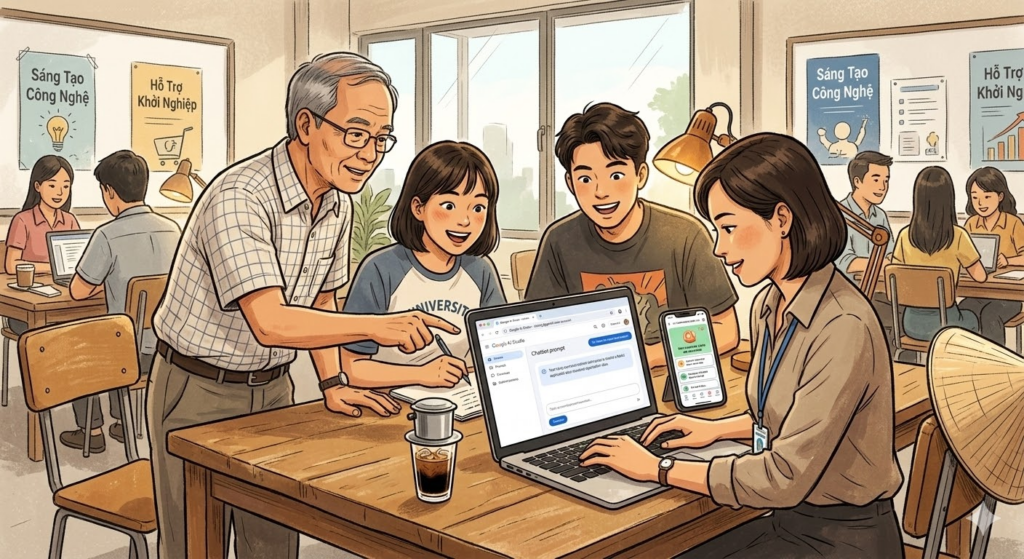

8) Why Non-Technical Builders Could Suddenly Ship Apps

If we are being honest, this section has one dominant protagonist: Google AI Studio.

Yes, many no-code and vibe tools mattered. But the biggest unlock for non-technical users was that AI Studio removed setup friction almost completely:

- Google positioned AI Studio as a free, web-based tool you can sign into with a Google account.

- At launch, it emphasized generous free quota and direct handoff to code/IDE workflows.

- It was framed for broad global developer access, not niche enterprise onboarding [R46].

Then the second unlock: account readiness. The API key setup flow became increasingly simple, including default project/key behavior for new users, so a non-technical person could move from prompt testing to app calls faster than before [R47].

Education was part of this too. Workspace accounts were a major distribution channel:

- Google documents that Workspace users have AI Studio access by default (admin-controlled).

- For Workspace for Education, there are explicit age/access guardrails, but the overall surface is still broad for students/faculty groups [R48].

I saw this firsthand in Vietnam contexts too: random professors, students, and ops people with existing Google accounts could test app ideas quickly without needing deep cloud setup. That was a real phenomenon.

8.1 The Throttle Moment (When the Mood Changed)

The “everyone can build” phase did not disappear, but it tightened.

| Date | Signal | Why It Mattered |
|---|---|---|
| 2024-2025 | AI Studio positioned as fast, free in-browser prototyping plus Gemini API access | Created a low-friction global entry path for non-technical builders [R46][R52] |
| 2025 onward | API key onboarding and AI Studio quickstart made app-building workflows easier to operationalize | Shifted many users from playground-only use into programmatic usage [R47][R51] |
| 2025-2026 | Official pricing and tiering docs emphasized free-vs-paid behavior and quota boundaries | Set clearer economics for scaling workloads [R50] |
| March 3, 2026 | Official rate-limits documentation clarified tiered quotas and stricter behavior for preview models | Confirms why users perceived a practical throttling shift at scale [R49] |

So yes, your interpretation tracks what many people felt: early wave = open experimentation, later wave = tighter tiered usage with clearer API-key and paid-tier gravity for sustained workloads [R49][R50][R51].

But let’s still keep the strategic truth clear: shipping an MVP got easier than sustaining it. The winning operating pattern became:

- Non-technical teams validate value quickly.

- Engineering hardens architecture, security, observability, and scale.

If a non-technical team can now produce v1 in days, does engineering become less important? No. Engineering becomes more strategic, because it owns reliability and consequence management [R10][R16][R25].

9) Context Engineering vs Prompt Engineering: The 2025 Upgrade

If 2023-2024 was prompt engineering, 2025 was context engineering.

Prompt engineering asks:

- How do I phrase this request?

Context engineering asks:

- What repository rules must the agent obey?

- What architecture constraints are non-negotiable?

- What tests define done?

- What cannot change under any circumstance?

- What evidence must be produced before merge?

That is why repo instruction files, spec-driven workflows, and policy-aware execution became default patterns. A clever prompt can improve a response. A strong context architecture improves a system [R10][R15][R16].
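As a rough illustration of "context architecture", the five questions above can be treated as required sections that must be filled in before an agent starts work. This is a sketch under stated assumptions: the section names and the rendered layout are invented for the example and do not match any specific tool's instruction-file format.

```python
# Hypothetical section names, one per context-engineering question above.
CONTEXT_SECTIONS = (
    "repository_rules",
    "architecture_constraints",
    "definition_of_done",
    "do_not_change",
    "required_evidence",
)


def render_context(sections: dict[str, list[str]]) -> str:
    """Render the answers into one instruction block; fail loudly on gaps."""
    missing = [name for name in CONTEXT_SECTIONS if not sections.get(name)]
    if missing:
        raise ValueError(f"context is missing sections: {', '.join(missing)}")
    lines: list[str] = []
    for name in CONTEXT_SECTIONS:
        lines.append(name.replace("_", " ").upper())
        lines.extend(f"- {item}" for item in sections[name])
        lines.append("")
    return "\n".join(lines).rstrip()


example = {
    "repository_rules": ["Follow the existing lint config"],
    "architecture_constraints": ["Services talk through the queue, never directly"],
    "definition_of_done": ["All unit and E2E tests pass"],
    "do_not_change": ["Database migration history"],
    "required_evidence": ["Changed files", "Test output", "Known risks"],
}
print(render_context(example).splitlines()[0])  # REPOSITORY RULES
```

Refusing to render an incomplete context is the design choice that matters here: a missing "do not change" list is a system problem, not a phrasing problem.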

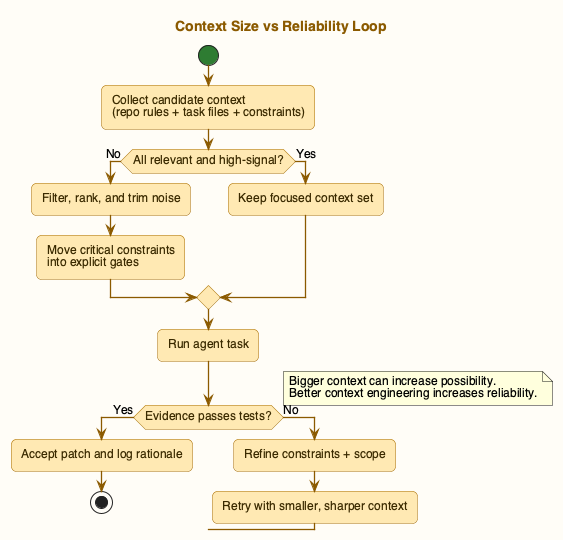

10) Context Window Limits: Why “More Context” Is Not Always Better

Large windows helped. But larger context was never a free reliability upgrade.

10.1 Failure Patterns with Big Context

| Failure Pattern | What Happens | Real Impact |
|---|---|---|
| Retrieval dilution | Critical details drown in noise | Missed constraints and wrong edits |
| Multi-needle confusion | One fact is easy; many precise facts are hard | Cross-file inconsistency |
| Conflicting instructions | Old docs/specs fight new requirements | Agent follows outdated guidance |
| Latency inflation | Bigger prompts slow every loop | Human-in-the-loop rhythm degrades |
| Cost amplification | Long prompts repeated across retries | Teams validate less often, risking quality |

Google’s own long-context guidance notes that single-needle retrieval can be strong while many-needle reliability varies [R12]. That aligns with what coding teams reported all year.

10.2 Practical Rules That Actually Worked

- Keep context deliberate, not maximal.

- Place highest-priority constraints in explicit, high-signal sections.

- Split monolith tasks into verifiable sub-tasks.

- Cache stable repo context when platform supports it.

- Require evidence artifacts: changed files, tests, known risks, rollback notes.

If you remember one line from this section, keep this: bigger context increases possibility; better context engineering increases reliability.
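"Deliberate, not maximal" can be sketched as a budgeted selection: rank context by priority and keep only what fits. The code below is a simplified illustration. The `ContextItem` type, the priority numbers, and the four-characters-per-token estimate are all assumptions, and real tokenizers will count differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextItem:
    name: str
    text: str
    priority: int  # lower number = more important


def estimate_tokens(text: str) -> int:
    # Crude assumption: roughly 4 characters per token.
    return max(1, len(text) // 4)


def select_context(items: list[ContextItem], budget_tokens: int) -> list[ContextItem]:
    """Greedily keep the highest-priority items that still fit the budget."""
    chosen: list[ContextItem] = []
    used = 0
    for item in sorted(items, key=lambda i: i.priority):
        cost = estimate_tokens(item.text)
        if used + cost <= budget_tokens:
            chosen.append(item)
            used += cost
    return chosen


items = [
    ContextItem("old_design_doc", "x" * 4000, priority=3),
    ContextItem("hard_constraints", "x" * 400, priority=1),
    ContextItem("relevant_module", "x" * 2000, priority=2),
]
picked = select_context(items, budget_tokens=700)
print([item.name for item in picked])  # ['hard_constraints', 'relevant_module']
```

In this toy run the stale design doc is what gets dropped, which is exactly the kind of material that causes the conflicting-instructions failure in the table above.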


11) Future Verdict (2026 and Beyond)

11.1 What Will Almost Certainly Happen

- Serious software workflows become human + agents, not human vs agents.

- Center of gravity continues moving toward hybrid runtime orchestration: IDE agents + background agents + scheduled automations.

- High-value skill shifts toward designing reliable execution loops.

- Velocity gains continue only where teams invest in review, testing, and governance.

11.2 What Is Still Uncertain

- Standardization: too many competing patterns and protocols.

- Evaluation quality: benchmark wins still do not guarantee production reliability.

- Safety under autonomy: prompt injection, over-permissioned tools, and accountability boundaries remain unresolved at scale.

- Talent adaptation: hiring and education systems are still catching up.

11.3 Competitive Prediction: Most Likely Market Shape

- Winners are full stacks, not single models: planning, permissions, scheduling, memory hygiene, review evidence, rollback controls.

- Hybrid systems in the OpenClaw direction become default for power users and small teams because compounding automation is hard to ignore.

- Pure “chat in IDE” remains massive, but becomes baseline rather than moat.

- Strategic moat shifts to trust, governance, and safe unattended actions.

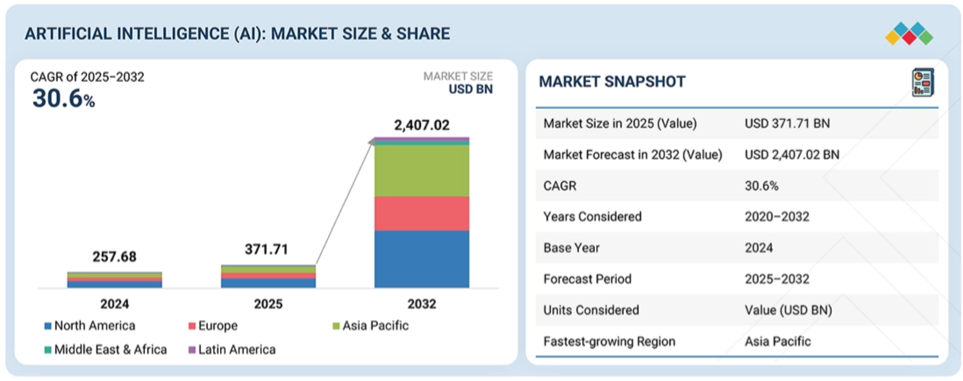

11.4 Market-Demand Reality Check (2025-2032)

To anchor this verdict with market projections, here is the clean version.

MarketsandMarkets projects the global AI market growing from USD 371.71B (2025) to USD 2,407.02B (2032) at a 30.6% CAGR [R37][R38][R42]. If that curve lands even close, AI is no longer a feature wave; it is infrastructure-level demand pressure on compute, tooling, data pipelines, governance, and talent.

My take: the headline number is useful as a direction signal, not a guarantee. Forecasts are scenario models. Still, a 30%+ CAGR model implies one thing very clearly: teams that treat AI as side tooling will get outpaced by teams that treat AI as an operating system for work.
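For anyone who wants to sanity-check the headline figure, the quoted growth rate follows from the two endpoint values and the standard compound-annual-growth formula. The snippet is only an arithmetic check on the numbers cited above. It says nothing about whether the forecast itself will hold.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1 / years) - 1


# Endpoints in USD billions, as quoted from the forecast above.
rate = cagr(371.71, 2407.02, years=2032 - 2025)
print(f"{rate:.1%}")  # 30.6%
```

So the endpoints and the stated rate are internally consistent: roughly a 6.5x market over seven years.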

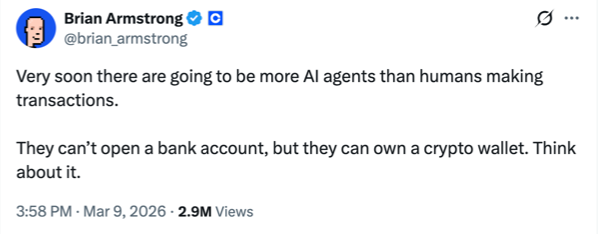

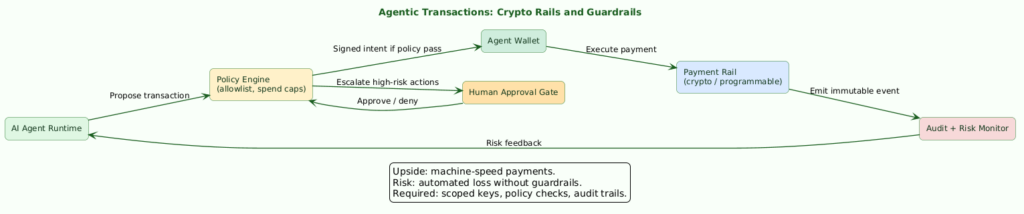

11.5 Agentic Transactions, Crypto Rails, and the Threat Model

In an X post from March 9, 2026, Coinbase CEO Brian Armstrong argued that AI agents may soon outnumber humans in transaction activity and noted that agents can own crypto wallets even if they cannot open bank accounts yet [R41].

That idea is not random hype. Coinbase’s own agent stack is now explicitly built around agent wallets, programmable spending limits, x402 payments, and guardrails such as key isolation and transaction controls [R39][R40].

So why does crypto look like a natural rail for agents?

- API-native money movement (machine-speed, programmable rules).

- Wallet-level permissioning that can be scoped per session/task.

- Easier machine-to-machine payment flows than traditional bank-account workflows.

But there is a real threat model here too, and this is where people get too optimistic too fast:

| Risk | What It Looks Like in Practice | Mitigation Pattern |
|---|---|---|
| Transaction swarm abuse | Large bot swarms create noisy, low-value, or manipulative transaction floods | Rate limits, spend caps, and anomaly detection per agent identity |
| Prompt-injection to payment action | Agent tricked into paying malicious endpoints | Payment allowlists, policy engines, and staged approvals |
| Wallet-key or permission leakage | Unauthorized spend from over-broad agent permissions | Key isolation, scoped privileges, fast revoke paths |
| Compliance blind spots | High-speed machine payments bypass normal review controls | KYT/KYC layering, auditable ledgers, and risk-tiered policies |


In my opinion, crypto can be an ideal execution rail for agent transactions. But only with hard guardrails. Otherwise, “autonomy” becomes “automated loss.”
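Here is what "hard guardrails" might look like at the smallest possible scale: an allowlist, a per-transaction cap, a session cap, and a staged-approval threshold checked before any payment is attempted. This is a hypothetical sketch and not Coinbase's or any wallet provider's API. The class name, field names, limits, and the `.test` hostnames are all invented for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass
class AgentPaymentPolicy:
    """Hypothetical per-agent guardrails; amounts are integer cents."""
    allowed_payees: frozenset
    per_tx_limit: int
    session_cap: int
    approval_threshold: int
    spent: int = 0

    def check(self, payee: str, amount: int) -> Decision:
        if payee not in self.allowed_payees:
            return Decision.DENY            # payment allowlist
        if amount <= 0 or amount > self.per_tx_limit:
            return Decision.DENY            # per-transaction spend cap
        if self.spent + amount > self.session_cap:
            return Decision.DENY            # cumulative cap for the session
        if amount >= self.approval_threshold:
            return Decision.NEEDS_APPROVAL  # staged human approval
        return Decision.ALLOW

    def record(self, amount: int) -> None:
        """Call after a payment actually settles."""
        self.spent += amount


policy = AgentPaymentPolicy(
    allowed_payees=frozenset({"api.example-data.test"}),
    per_tx_limit=5_000,
    session_cap=10_000,
    approval_threshold=2_000,
)
print(policy.check("api.example-data.test", 500))    # Decision.ALLOW
print(policy.check("api.example-data.test", 2_500))  # Decision.NEEDS_APPROVAL
print(policy.check("evil.example.test", 500))        # Decision.DENY
```

The checks run in deny-first order on purpose: a prompt-injected payee is rejected before the amount is even considered.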

11.6 Practical Conclusion

The future is not “AI writes everything.”

The future is:

- humans define intent and constraints,

- agents execute and iterate (interactive + background),

- humans review, govern, and own consequences.

In other words: fewer pure coders, more software orchestrators.

And honestly? That is exciting. A little scary, but exciting.

12) What I Would Do Differently (If Restarting in 2025)

If I could rewind to February 2025, I would make three changes earlier:

- I would formalize repo instructions sooner, before agent usage scales.

- I would enforce evidence-based merge criteria from day one on every project.

- I would separate prototype mode and production mode explicitly, so teams stop confusing speed with readiness.

That single distinction, prototype vs production, would have saved so much rework. Seriously, how many times did we all “ship” something that was actually just a good demo?

FAQ

Q1: Is prompt engineering dead now?

A: No. Prompt quality still matters. But in production, prompting without context architecture is fragile. Prompting is tactical. Context engineering is systemic.

Q2: Did one vendor clearly win 2025?

A: Not really. Different stacks won different layers: model quality, IDE UX, terminal workflows, orchestration, enterprise governance. The game became compositional.

Q3: Why did non-engineers suddenly build apps faster?

A: Because agentic builders reduced the barrier to entry for UI, CRUD flows, connectors, and deployment. But sustaining reliable systems still requires strong engineering fundamentals.

Q4: Does huge context window solve reliability?

A: Not by itself. Bigger context can increase noise, cost, and latency. Deliberate context curation plus validation loops is what improved outcomes.

Q5: Is OpenClaw-like continuous agent architecture always better?

A: Not always. It is powerful for recurring operations, but only with clear guardrails: permission boundaries, approval gates, risk-tiered actions, and auditable artifacts.

Q6: If AI keeps moving from chat helper -> coder -> researcher -> planner -> autonomous agent, are we heading to a fully automated life where humans finally get more time for family, health, and meaning?

A: This is the biggest question, honestly. The optimistic path is real: AI handles more repetitive cognitive labor, and people reclaim time for relationships, creativity, and deeper life goals. But the default outcome is not guaranteed. Without policy, redistribution, education redesign, and strong human governance, automation can concentrate power instead of freeing people. So my current take is: yes, AI can create more human freedom, but only if we design for that outcome on purpose, not by accident.

Discussion Hooks

- In your team, what broke first in 2025: requirements quality, test discipline, or governance?

- Have you seen non-technical builders create production-worthy tools, or mostly fast prototypes that were abandoned once Google tightened its AI usage limits?

- Which workflow feels safer for you today: IDE-only copilots or hybrid continuous agent systems?

- If you had to optimize one thing in 2026, would you pick model quality, context architecture, or review automation?

I would love to hear your feedback!

TL;DR Recap

- 2025 was the year coding moved from prompt-response to agentic execution loops.

- March 2025 felt revolutionary but operationally messy.

- Context engineering, not prompt cleverness, became the reliable multiplier.

- Non-technical builders gained shipping power, while engineering responsibility shifted up-stack to reliability/governance.

- Market pressure is accelerating: one widely cited 2025-2032 forecast models AI at USD 371.71B to USD 2,407.02B (30.6% CAGR) [R37][R38].

- Agentic commerce is moving from theory to tooling: agent wallets and programmable payment rails are now concrete, with both upside and new risk surfaces [R39][R40][R41].

- 2026 direction is clear: human intent + agent execution + human accountability.

References

- [R1] Anthropic. (2025, February 24). Claude 3.7 Sonnet and Claude Code. https://www.anthropic.com/news/claude-3-7-sonnet

- [R2] TechCrunch. (2025, January 23). OpenAI launches Operator, an AI agent that performs tasks autonomously. https://techcrunch.com/2025/01/23/openai-launches-operator-an-ai-agent-that-performs-tasks-autonomously/

- [R3] TechCrunch. (2025, February 25). OpenAI rolls out Deep Research to paying ChatGPT users. https://techcrunch.com/2025/02/25/openai-rolls-out-deep-research-to-paying-chatgpt-users/

- [R4] Google DeepMind. (2025, March). Gemini 2.5: Our newest Gemini model with thinking. https://blog.google/technology/google-deepmind/gemini-model-thinking-updates-march-2025/

- [R5] Lovable. (2025). Lovable changelog. https://docs.lovable.dev/changelog

- [R6] Google. (2025). Jules. https://jules.google/

- [R7] Firebase. (2025). Firebase Studio documentation. https://firebase.google.com/docs/studio

- [R8] Cursor. (2025). Cursor changelog. https://www.cursor.com/changelog

- [R9] Windsurf. (2025). Windsurf editor changelog. https://windsurf.com/changelog

- [R10] GitHub Changelog. (2025). Copilot instruction/context workflow updates. https://github.blog/changelog/

- [R11] OpenClaw community + ecosystem references (2025-2026). OpenClaw overview and project history. https://en.wikipedia.org/wiki/OpenClaw

- [R12] Google AI for Developers. (2025). Long context guidance and practices. https://ai.google.dev/gemini-api/docs/long-context

- [R13] Local operations analysis (this repository). (2026). 50 days with OpenClaw. /Users/pmlecuong/Documents/FrankleeGitHub/franklee/Documents/YoutubeEducationVideos/22Feb26-50-days-with-OpenClaw-The-hype-the-reality-what-ac/README.md

- [R14] Local ecosystem context (this repository). (2025-2026). Kiro and Lovable changelogs and notes. Internal repository artifacts.

- [R15] Kiro. (2025). Kiro home page. https://kiro.dev/

- [R16] Kiro. (2025). About Kiro. https://kiro.dev/about

- [R17] DeepSeek. (2025, January 20). DeepSeek-R1 release. https://api-docs.deepseek.com/news/news250120

- [R18] DeepSeek. (2025). Reasoning model (deepseek-reasoner) and thinking mode guidance. https://api-docs.deepseek.com/guides/reasoning_model

- [R19] Associated Press. (2025, January 27). A frenzy over an artificial intelligence chatbot made by Chinese tech startup DeepSeek upended stock markets Monday. https://apnews.com/article/52c54e361616509280bd2775674b6b4b

- [R20] InfoQ. (2025, May). OpenAI Introduces Codex: A Cloud-Based Software Engineering Agent. https://www.infoq.com/news/2025/05/openai-codex/

- [R21] OpenAI Developers. (2025). Codex quickstart. https://developers.openai.com/codex/quickstart

- [R22] Qwen Team. (2025, April 29). Qwen3: Think deeper, act faster. https://qwenlm.github.io/blog/qwen3/

- [R23] DeepSeek. (2025, March 25). DeepSeek-V3-0324 release and open-source model update. https://api-docs.deepseek.com/news/news250325

- [R24] Z.ai / THUDM. (2025). GLM-4.5 open-source model repository. https://github.com/zai-org/GLM-4.5

- [R25] AWS Security Bulletin. (2025, July 14). AWS-2025-019: Kiro AI IDE human-in-the-loop control bypass. https://aws.amazon.com/security/security-bulletins/AWS-2025-019/

- [R26] CNBC TV18. (2025, April 17). OpenAI in talks to buy AI coding assistant Windsurf for about $3 billion, Bloomberg News reports. https://www.cnbctv18.com/technology/openai-in-talks-to-buy-ai-coding-assistant-windsurf-for-about-3-billion-bloomberg-news-reports-19592445.htm

- [R27] CNBC TV18. (2025, July 11). Google hires Windsurf CEO, some R&D employees in $2.4 billion deal, WSJ reports. https://www.cnbctv18.com/technology/google-hires-windsurf-ceo-some-rd-employees-in-2-4-billion-deal-wsj-reports-19685978.htm

- [R28] InfoWorld. (2025, July 14). Cognition agrees to buy what’s left of Windsurf. https://www.infoworld.com/article/4023030/cognition-agrees-to-buy-whats-left-of-windsurf.html

- [R29] Cognition. (2025, July 14). Cognition x Windsurf. https://cognition.ai/blog/windsurf

- [R30] Cursor. (2025). Security FAQ (states Cursor is built from a fork of VS Code codebase). https://cursor.com/security

- [R31] Windsurf. (2025). How is Windsurf different from VS Code? https://windsurf.com/faq/how-is-windsurf-different-from-vs-code

- [R32] Windsurf Docs. (2025). Windsurf docs (extension marketplace and compatibility notes). https://docs.windsurf.com/windsurf/cascade/memories

- [R33] Microsoft. (2025). VS Code FAQ: can I use extensions from the marketplace in VS Code OSS? https://code.visualstudio.com/docs/supporting/faq#_can-i-use-extensions-from-the-marketplace-in-vscode-oss

- [R34] TechCrunch. (2024, June 21). OpenAI buys Rockset to bolster its enterprise AI. https://techcrunch.com/2024/06/21/openai-buys-rockset-to-bolster-its-enterprise-ai/

- [R35] TechCrunch. (2024, June 24). OpenAI buys a remote collaboration platform. https://techcrunch.com/2024/06/24/openai-buys-a-remote-collaboration-platform/

- [R36] Anthropic Docs. (2025). Claude Code IDE integrations. https://docs.anthropic.com/en/docs/claude-code/ide-integrations

- [R37] MarketsandMarkets. (2025, July 22). Artificial Intelligence Market worth $2,407.02 billion by 2032. https://www.marketsandmarkets.com/PressReleases/artificial-intelligence.asp

- [R38] MarketsandMarkets. (2025). Artificial Intelligence Market – Global Forecast to 2032. https://www.marketsandmarkets.com/Market-Reports/artificial-intelligence-market-74851580.html

- [R39] Coinbase Developer Platform. (2026). Agentic Wallet. https://docs.cdp.coinbase.com/agentic-wallet/welcome

- [R40] Coinbase Developer Platform. (2026). AgentKit overview. https://docs.cdp.coinbase.com/agent-kit

- [R41] Brian Armstrong. (2026, March 9). X post about AI agents and crypto wallets (local image copy: images/references/brian-armstrong-agent-population-2026.png).

- [R42] MarketsandMarkets. (2026). AI market snapshot image (local image copy: images/references/ai-market-share-prediction.png).

- [R43] Google. (2025). Gemini 3. https://blog.google/products-and-platforms/products/gemini/gemini-3/

- [R44] Google. (2025). Gemini 3 for developers. https://blog.google/innovation-and-ai/technology/developers-tools/gemini-3-developers/

- [R45] Google. (2026). Google Antigravity official site. https://antigravity.google/

- [R46] Google. (2024). Gemini API and Google AI Studio: faster to build with free in-browser prototyping and broad regional access. https://blog.google/technology/ai/gemini-api-developers-cloud/

- [R47] Google AI for Developers. (2026). Set up your API key (default project/API key flow for new users). https://ai.google.dev/gemini-api/docs/api-key

- [R48] Google AI for Developers. (2026). Gemini API and AI Studio available regions (Workspace and Workspace for Education access notes). https://ai.google.dev/gemini-api/docs/available-regions

- [R49] Google AI for Developers. (2026, March 3). Rate limits (preview-model throttling and paid-tier scaling). https://ai.google.dev/gemini-api/docs/rate-limits

- [R50] Google AI for Developers. (2026). Gemini API pricing. https://ai.google.dev/gemini-api/docs/pricing

- [R51] Google AI for Developers. (2026). AI Studio quickstart. https://ai.google.dev/gemini-api/docs/ai-studio-quickstart

- [R52] Google AI Studio. (2026). Google AI Studio web app. https://aistudio.google.com/

Related reading

Read the related posts here:

- Intent First, Prompts Second: A Practical Model for AI Projects

- Context Engineering vs Vibe Coding: A Business Person's Technical Discovery (Part 1 of 2)

- My First Vibe Coding Journey: From Zero to Working App in 4 Hours (And What I Learned About Spec-Driven Development, SDD)

Image Disclosure

Some images used in this post were created with AI. They may appear realistic, but they do not depict real scenes or real photographs unless explicitly stated otherwise. When a realistic image of me is an actual photograph, the caption will clearly note that it is a real image.
