I still remember the first time I told an AI coder to “just build it.” It was a tarot + fortune‑telling app, a playful idea for the weekend. The UI came out surprisingly decent and fast, the Gemini API responded, and I deployed it to my home server like a proud kid showing a science fair project. Then… nothing. No intent. No follow‑up. No plan. It just sat there like a poster on the wall that I forgot to look at again. Personal experience, not a grand theory, but it hurts because I can see the pattern now.

And it wasn’t just one app. Over the next few weeks, I built maybe 5–10 tiny demo apps with different AI coding tools: some with Google AI Studio, some with Gemini, some with Codex, some with Kiro from AWS, and some with random agent workflows. Fun? Absolutely. Useful? Mostly… no. I learned a lot about frameworks, deployment, debugging, architecture, and the weird psychology of letting an AI model type for me. But without intent, I was basically collecting souvenirs. This is a personal journey, not a research study, so take it as lived experience, not as universal truth.

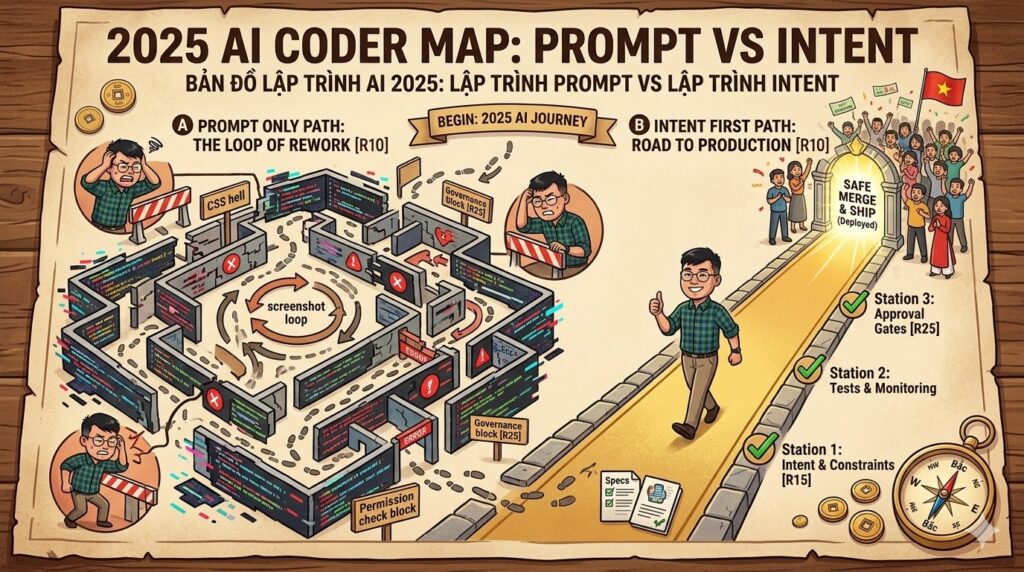

This post is about that shift. Prompt engineering is still a real skill, still relevant. But strategic intent and systems thinking are the layer above prompting—the layer that decides what the prompts are even for. And for solo builders (one‑person companies), that layer is not optional. It’s your insurance policy. It’s your sanity. It’s what keeps your AI projects from turning into a graveyard of “cool demos.” [R1][R2][R3][R4][R5][R6]

QUICK SUMMARY (TL;DR)

In my experience, 2024–2025 felt like the prompt engineering era. But prompts are execution. Strategic intent and systems thinking are the pre‑prompt architecture that makes AI outputs matter. I learned this the hard way by building many AI demo apps that had zero future life. Now I start with intent, map the system, define constraints, and only then write prompts. It’s especially critical for solo builders because there’s no team buffer. Intent first, prompts second is the shift that turned my AI experiments from toys into potential products. [R1][R2][R3][R4][R5][R6]

Here is the list of repos I built over the course of 2025.

Why Prompt Engineering Became a Thing (And Why That Wasn’t Wrong)

Let’s be real, the hype was real. Prompt engineering got attention because it delivered immediate results, and a growing body of research tried to make it systematic rather than mystical. A major 2024 survey catalogs techniques and applications across the field, which shows how quickly prompt engineering matured as a research topic. [R4]

The job market data is more nuanced. A 2025 analysis of LinkedIn postings found that prompt‑engineer roles are still relatively rare, but when they do appear, they demand a specific mix of skills: AI knowledge (22.8%), prompt design (18.7%), communication (21.9%), and creative problem‑solving (15.8%). That tells me the role is emerging, but it’s not just about clever prompts. It’s about systems, communication, and intent. [R5]

So here’s the honest lesson: prompt engineering is a skill inside a project, not the project itself. If you don’t define the project, the prompts will do whatever you asked, but not necessarily what you needed. The real question becomes: what comes before the prompt? [R1][R2][R3]

Systems Thinking + Strategic Intent: The Layer Above Prompting

Systems thinking is the habit of seeing the whole system—actors, feedback loops, constraints, and how changes ripple over time. That’s why it shows up in design and complex problem solving as a core principle. [R1][R17][R18]

Strategic intent is the aspirational direction of a system, the “why are we moving” and “where are we actually trying to go.” The Association for Project Management defines it as the overarching purpose or intended direction needed to reach a vision. That definition matters because it puts intent before execution. [R2]

And yes, this idea has history. Hamel and Prahalad framed strategic intent as a long‑term ambition that aligns effort and focus, not just a tactical plan. That’s the missing layer in most AI prompt workflows today. [R3]

So in plain words: prompts are the tactics, intent is the strategy, and systems thinking is the map. If you only do prompts, you’re walking fast in a fog. If you do intent and systems thinking, you’re walking slower but you’re actually moving in a direction that matters. [R1][R2][R3]

PlantUML Diagram

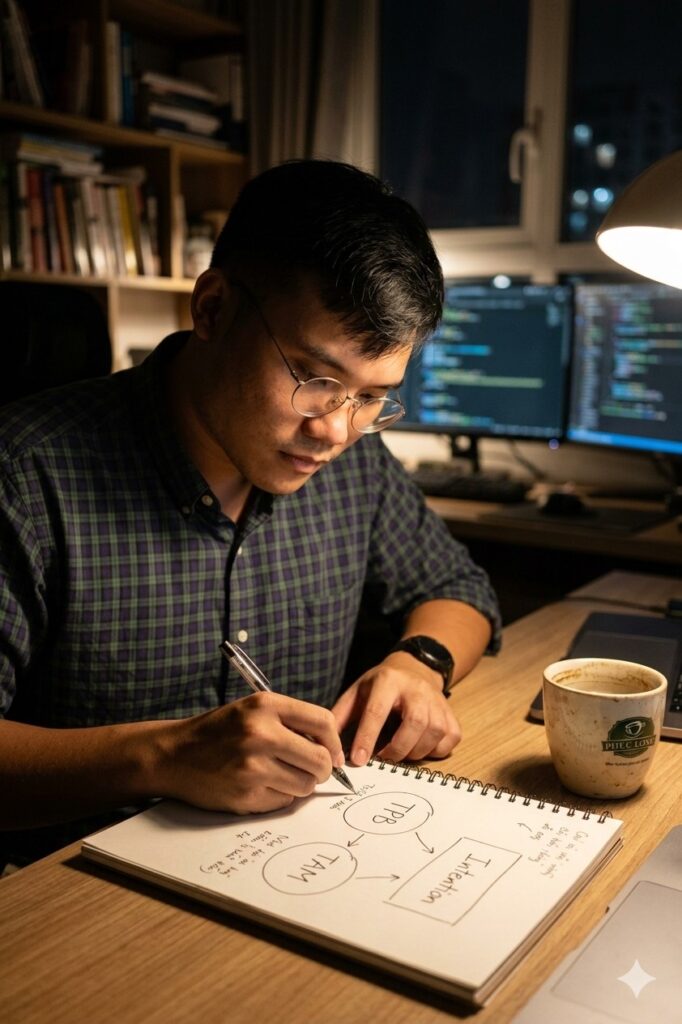

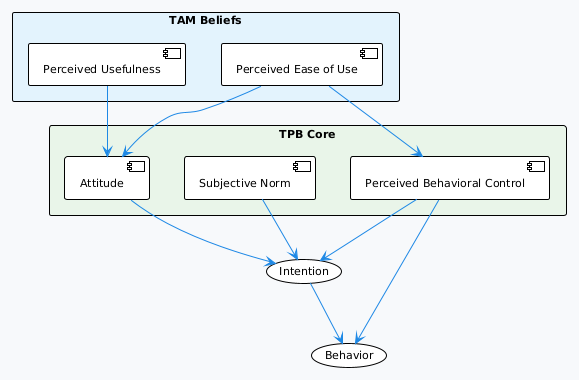

TPB + TAM: Intent Formation Meets Tech Beliefs

Alright, here’s the academic angle. I’m adding it to put the “intent first, prompts second” claim on more solid ground. The cleanest way I’ve found to explain how intent forms and why people adopt tech is to pair TPB with TAM. TPB explains how intention is built. TAM explains why someone believes the tech is worth using. Put together, they give us a practical lens for why “intent first” actually works. [R9][R10][R11][R12][R14][R15][R16]

Theory of Planned Behavior (TPB) says intention is shaped by three forces: attitude, subjective norm, and perceived behavioral control. So if a solo builder doesn’t feel confident, doesn’t see social support, or doesn’t believe the outcome matters, intention collapses even if the prompts are good. [R9][R11][R12][R13]

Technology Acceptance Model (TAM) says perceived usefulness and perceived ease of use shape attitude and intention to use the technology. In plain words: if AI feels helpful and not too hard, people adopt it. If not, they bounce. That’s why prompt engineering alone can’t rescue a system that feels useless or painful. [R10][R14][R15]

So the combo goes like this: TAM feeds TPB. Perceived usefulness and ease of use help build attitude. That attitude, combined with subjective norm and perceived behavioral control, forms intention. That intention drives behavior. This is why I say “intent first, prompts second.” Intent is not just a strategy choice, it is a behavioral requirement. [R9][R10][R11][R12][R13]

Quick Mapping (TPB + TAM in one view)

- Perceived usefulness → stronger attitude (TAM → TPB). [R10][R14][R15]

- Perceived ease of use → stronger attitude + higher control. [R10][R14][R15]

- Attitude + subjective norm + perceived behavioral control → intention. [R9][R11][R12][R13][R16]

- Intention + control → actual usage and follow‑through. [R9][R11][R12]
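The mapping above can be sketched as a toy calculation. This is only an illustration of the direction of the arrows, not a validated model: the 0-to-1 scales, the equal weights, and every name in the code are my own assumptions, not values from the TPB or TAM literature. It also leaves out the "ease of use → higher control" arrow to stay short.

```python
from dataclasses import dataclass


@dataclass
class Beliefs:
    """Self-rated scores on a 0.0-1.0 scale (the scale is an assumption)."""
    usefulness: float       # TAM: perceived usefulness
    ease_of_use: float      # TAM: perceived ease of use
    subjective_norm: float  # TPB: perceived social support
    control: float          # TPB: perceived behavioral control


def attitude(b: Beliefs) -> float:
    # TAM feeds TPB: usefulness and ease of use shape attitude.
    # Equal weighting is a placeholder, not an empirical estimate.
    return (b.usefulness + b.ease_of_use) / 2


def intention(b: Beliefs) -> float:
    # TPB: attitude, subjective norm, and perceived control form intention.
    # Equal weights again; real studies estimate them per population.
    return (attitude(b) + b.subjective_norm + b.control) / 3


# Same tool, same prompts, different confidence and support.
confident = Beliefs(usefulness=0.9, ease_of_use=0.8, subjective_norm=0.6, control=0.7)
doubtful = Beliefs(usefulness=0.9, ease_of_use=0.8, subjective_norm=0.2, control=0.1)

print(f"confident builder: {intention(confident):.2f}")
print(f"doubtful builder:  {intention(doubtful):.2f}")
```

Both builders rate the tool identically, yet the second one scores lower on intention because the TPB inputs are weak. That is the point of pairing the two models: a good tool is not enough on its own.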

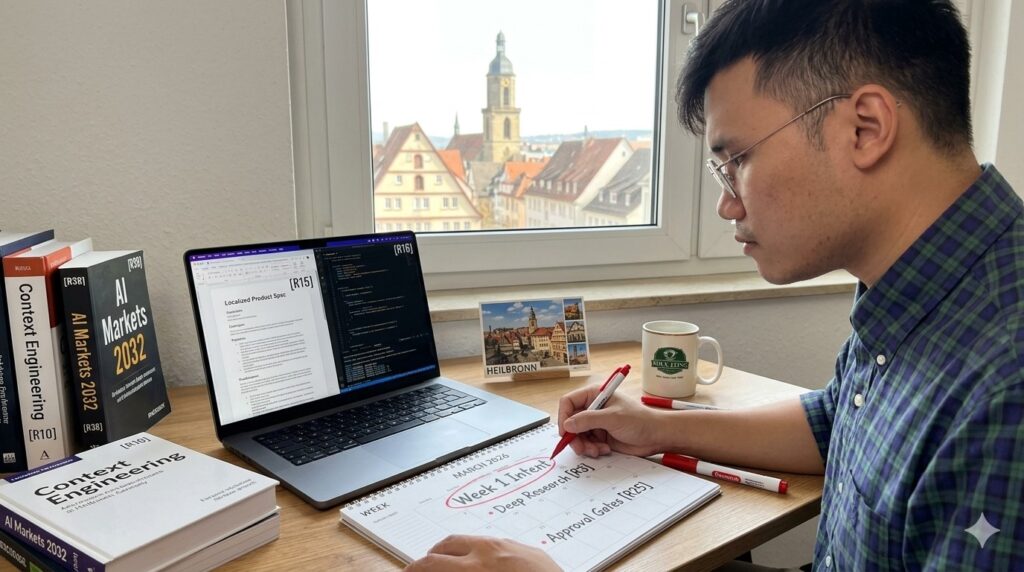


The Tarot App That Taught Me the Lesson

This is personal experience, not a universal template. But I need to tell it because it’s the clearest example in my head.

I built a tarot/fortune‑telling web app as my very first AI‑coding experiment. It was fun. The AI built the UI fast, integrated Gemini, and I deployed it. I felt proud. I felt “wow, I can ship now.” But I had no plan: no target user, no feedback loop, no maintenance idea, no post‑launch goal. So the app died the same month it was born. It was a fun experiment, not a product.

That failure was not because the prompt was weak. It was because I never defined the intent. What was the app for? Who would it help? What would success even look like? I never answered those. I just “built it” because I could. Personal experience, not a research claim. Hey, I was a newbie anyway who just happened to have access to a powerful tool. I didn’t know what I didn’t know.

If you want the longer story, I wrote about my first vibe coding journey and how it shifted my product thinking. It’s a different angle, but the same lesson. [R7]

From 5–10 Demo Apps to One Simple Realization

After the tarot app, I built more demos. Different stacks, different AI tools, different frameworks. I learned deployment, architecture, and how to debug with an agent. That learning was real. It’s the only reason I can talk about this now. Again, this is personal experience, not a general claim.

But I also saw the pattern: without intent, the apps stop after the demo. They don’t become products. They don’t survive the next month. They don’t create a loop where users, feedback, and improvement actually happen. That’s why I started to think in terms of systems and lifecycle, not just prompts. [R1][R2]

This is also why I now see AI agents as “tools in a loop.” The loop is not just model → output. It’s goal → plan → tool use → feedback → adjustment. That loop only works when the goal and intent are clear. [R8]
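A minimal sketch of that loop, assuming the planner, the tool, and the done-check are plain functions you pass in. The names `run_loop`, `toy_plan`, `toy_act`, and `toy_done` are hypothetical stand-ins, not any real agent framework's API.

```python
from typing import Callable


def run_loop(
    goal: str,
    plan: Callable[[str, list[str]], str],
    act: Callable[[str], str],
    is_done: Callable[[str, str], bool],
    max_steps: int = 5,
) -> list[str]:
    """Goal -> plan -> tool use -> feedback -> adjustment, repeated."""
    if not goal.strip():
        # Without a goal there is nothing to measure feedback against.
        raise ValueError("define the goal before starting the loop")

    history: list[str] = []
    for _ in range(max_steps):
        step = plan(goal, history)  # plan, adjusted by earlier feedback
        result = act(step)          # tool use
        history.append(result)      # feedback kept for the next plan
        if is_done(goal, result):
            break
    return history


# Toy stand-ins for a real model and a real tool.
def toy_plan(goal: str, history: list[str]) -> str:
    return f"attempt {len(history) + 1} toward: {goal}"


def toy_act(step: str) -> str:
    return f"done: {step}"


def toy_done(goal: str, result: str) -> bool:
    return "attempt 3" in result


history = run_loop("collect 5 user interviews", toy_plan, toy_act, toy_done)
for entry in history:
    print(entry)
```

The goal is the only argument every other function depends on. Take it away and the planner has nothing to plan toward and the done-check has nothing to check, which is why the sketch refuses to start without one.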

Solo Builder Reality: Why One‑Person Companies Need Intent More Than Teams

If you’re a solo builder (a one‑person company), you don’t have the safety net of a team. There’s no architect to save you. There’s no QA team to catch the holes. There’s no product partner to challenge the assumptions. So intent isn’t just “nice.” It’s your defensive wall.

Also, the data shows the solo/one‑person business world is huge and growing. The U.S. Census Bureau reports that nonemployer establishments grew faster than employer businesses nearly every year from 2012 to 2023, and nonemployers made up a larger share of all U.S. businesses across that period. That’s the macro trend behind the solo builder wave. [R6]

That matters because the constraints of a solo builder are different. Time is your scarcest resource. Your energy is your bottleneck. Your feedback loop is slow. Intent is how you prevent your limited energy from dissolving into ten random demos. [R2][R6]

Question for you: If you only have 10 hours this weekend, do you want 10 hours of “cool prototypes” or 10 hours of “intent‑driven progress”? Which one will you remember after three months? Which one might still be alive after one year?

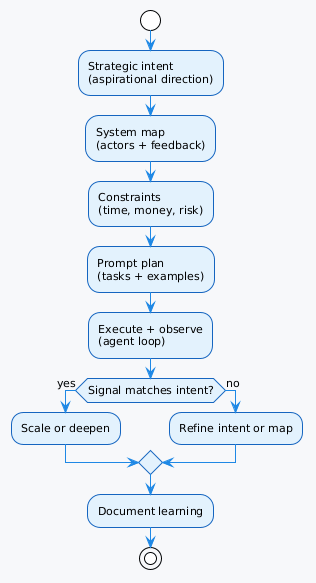

The Intent‑to‑Prompt Model (My Practical Version)

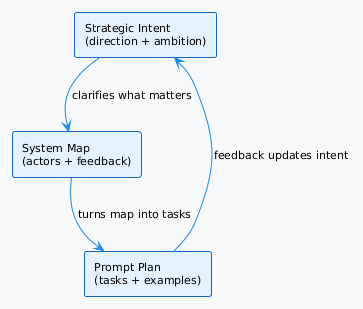

This is my current working model. It’s not academic, it’s just what saved me from endless demo loops. I call it Intent‑to‑Prompt. Five steps. One loop. It looks simple, but the power is in the discipline. [R1][R2]

Step 1: Intent (Aspirational direction)

What is the long‑term direction? What is the outcome that matters? This is the “why do we exist” layer. Strategic intent is a real management concept, not a TikTok trend. [R2][R3]

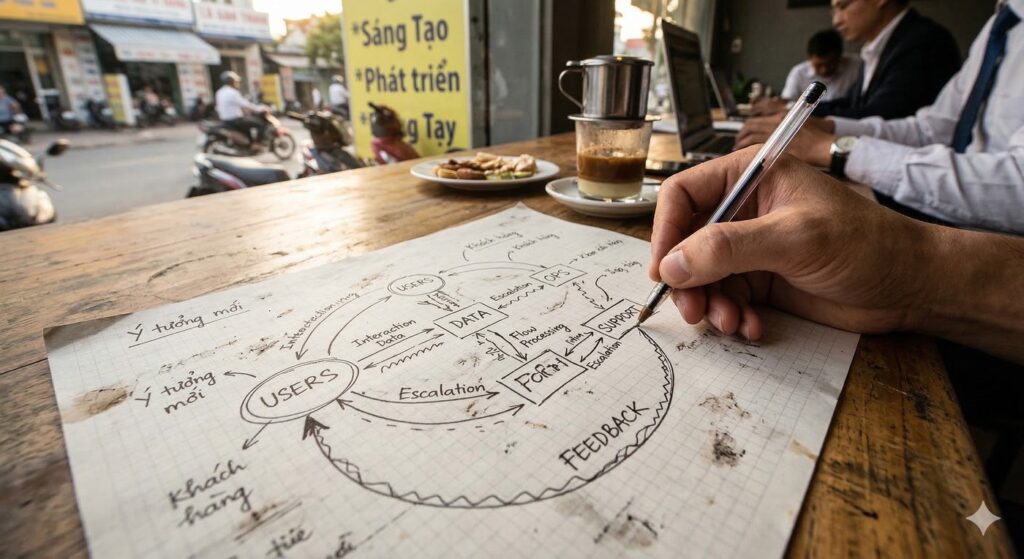

Step 2: System Map (Actors, constraints, feedback)

Who are the actors? What systems do they touch? What feedback loops exist? Systems thinking means mapping those relationships before you jump into execution. [R1]

Step 3: Constraints (Time, money, risk, ethics)

What limits actually matter? What is “out of scope”? What risks will break you? This is the part most prompt engineers skip because it feels “slow,” but it’s what keeps you from rework. [R1][R2]

Step 4: Prompt Plan (Now you write prompts)

Only after the first three steps do you start designing prompts. Now prompts become tactical instructions inside a system, not random magic spells. [R4][R5]

Step 5: Feedback Loop (Run, learn, adjust)

Ship fast, but only inside the intent. Use feedback to update the map. This is how systems evolve instead of collapsing. [R1][R8]
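Here is one way the five steps could look as code. It is a rough sketch, not a tool I actually ship: `IntentDoc`, `build_prompt`, and the sample tarot intent are all made up for illustration. The only rule it enforces is the ordering, so that no prompt gets built until steps 1 to 3 are filled in.

```python
from dataclasses import dataclass, field


@dataclass
class IntentDoc:
    intent: str                                           # Step 1
    actors: list[str] = field(default_factory=list)       # Step 2
    constraints: list[str] = field(default_factory=list)  # Step 3
    lessons: list[str] = field(default_factory=list)      # Step 5

    def missing(self) -> list[str]:
        """Names of the pre-prompt steps that are still empty."""
        gaps = []
        if not self.intent.strip():
            gaps.append("intent")
        if not self.actors:
            gaps.append("system map")
        if not self.constraints:
            gaps.append("constraints")
        return gaps


def build_prompt(doc: IntentDoc, task: str) -> str:
    """Step 4: write the prompt only once steps 1-3 are filled in."""
    gaps = doc.missing()
    if gaps:
        raise ValueError(f"fill in before prompting: {', '.join(gaps)}")
    lines = [
        f"Intent: {doc.intent}",
        f"Actors: {', '.join(doc.actors)}",
        f"Constraints: {', '.join(doc.constraints)}",
    ]
    if doc.lessons:
        lines.append(f"Lessons so far: {', '.join(doc.lessons)}")
    lines.append(f"Task: {task}")
    return "\n".join(lines)


# Hypothetical example values, not the real tarot app's intent.
doc = IntentDoc(
    intent="Help first-time tarot readers learn one card a day",
    actors=["reader", "card content", "Gemini API"],
    constraints=["solo builder, 10 hours a week", "no paid ads"],
)
prompt = build_prompt(doc, "Draft the daily-card screen")

# Step 5: feedback updates the doc, and the next prompt carries it.
doc.lessons.append("users skip long card descriptions")
next_prompt = build_prompt(doc, "Shorten the card description")
print(next_prompt)
```

The design choice is that the prompt is derived from the intent document rather than written from scratch each time, so every prompt inherits the same direction and constraints.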


A Mini Dataset (Theory‑Only) to Compare Outcomes

Okay, let’s be honest. I don’t have a randomized controlled study. This is a theoretical comparison based on systems thinking and strategic intent literature, plus my lived experience. It is not empirical data. Use it as a mental model, not as proof. [R1][R2][R3]

| Scenario (Theory) | With Intent Framing | Prompt‑Only Approach | Likely Result | Why It Happens | Reference Anchor |
|---|---|---|---|---|---|
| New AI app idea | Clear target user + purpose | Just build features | Faster demo, weaker product | No strategic direction | [R2][R3] |
| Tool selection | Stack chosen for long‑term fit | Stack chosen for speed | Higher stability | System constraints mapped | [R1] |
| Feature scope | 3–5 core flows | 12 nice‑to‑have features | Lower rework | Constraints defined | [R1][R2] |
| Post‑launch plan | Maintenance + feedback loop | No maintenance plan | Higher survival | Feedback loop planned | [R1][R8] |
| Solo builder focus | One KPI + one audience | Everything for everyone | Less burnout | Intent reduces noise | [R2][R6] |

What I would do differently (looking back): I would stop after the first demo app, freeze the tool‑hopping, and do a one‑page intent statement: target user, measurable outcome, maintenance plan, and one month of feedback loops. That would have saved me at least 3–4 weeks of random experimenting. Personal experience, not a universal claim.

Timeline Block: A Simple 4‑Week Intent‑First Sprint (Solo Builder)

This is how I’d run a small AI product experiment now. It’s short, it’s structured, and it keeps the intent alive. [R1][R2]

| Week | Focus | Concrete Output | Risk Check |
|---|---|---|---|
| 1 | Intent + system map | 1‑page intent doc + system map | “Do I know the user?” |
| 2 | Prompt planning | Prompt library + constraints list | “Are prompts tied to intent?” |
| 3 | Build + test | Working prototype + user feedback | “Are users reacting as expected?” |
| 4 | Iterate + decide | Keep, pivot, or stop | “Does this deserve maintenance?” |

Notice the logic: the build phase is short, but the intent phase is explicit. That’s the difference.

Failure‑Cost Ledger (Realistic, Not Theoretical)

These are realistic failure modes I’ve seen in my own projects and in other teams. The costs are rough estimates from personal observation, not formal accounting or a research claim.

| Failure Event | Immediate Impact | Cost Impact | Time Impact | Root Cause |
|---|---|---|---|---|
| Build a “cool” AI demo with no users | App abandoned | ~6–12 hours wasted | +2 weeks momentum loss | No intent statement |
| Feature explosion from AI suggestions | Scope bloat | ~2–3x dev time | +3–4 weeks | No constraints |
| Launch without maintenance plan | Bugs stay open | User trust drops | Ongoing | No post‑launch intent |
| One‑person team tries to serve everyone | Focus collapse | Burnout | 1–2 months | No priority filter |
| Prompting without system map | Misaligned outputs | Rework | +1–2 weeks | Missing feedback loop |

This is why I say: prompt engineering is real, but intent is the cost control. [R1][R2]

“But Isn’t This Just Management Theory?”

I get the skepticism. You might be thinking: “Do I really need strategic intent for a small app? Isn’t this overkill?” Fair question.

Here’s my honest take: if you’re experimenting for fun, you can skip intent. If you’re testing a technical skill, skip it. But if you want any real outcome beyond a demo, you need some form of intent or you’ll drift. Systems thinking and strategic intent are not bureaucracy; they’re clarity tools. [R1][R2][R3]

Also, many of us are solo builders now. There’s no team to counterbalance your blind spots. Intent is how you create a mental team: it forces you to ask the questions a Product Manager, Business Analyst, and Product Owner would normally ask. [R2][R6]

So the real question is: what kind of project are you building? A weekend toy? Or something with a life cycle? If it’s the second, the intent layer pays for itself quickly.

Before You Write Prompts, Ask These 10 Questions

These are the questions I now ask myself before I let an AI agent code. You’ll notice they are intent‑first, not prompt‑first.

- Who is the real user, not the imagined user?

- What is the single outcome I care about?

- What would make this project not worth doing?

- What constraints will kill this project if ignored?

- What is the smallest system map that still feels honest?

- What happens after launch? Who maintains it?

- What data or feedback would change my mind quickly?

- What are the top 3 risks I can’t ignore?

- What does “good enough” look like in 30 days?

- If this fails, what will I still learn?

If you can answer these, your prompts will be sharper. If you can’t, the prompt output might still be pretty, but your project won’t survive. [R1][R2]
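If you like checklists you can run, the ten questions fit in a few lines. This is a minimal sketch under my own assumptions: the short key names and the sample answers are invented for illustration, and "answered" just means "not blank".

```python
# Short keys for the ten questions above (the key names are my own).
QUESTIONS = [
    "real_user", "single_outcome", "not_worth_doing", "killer_constraints",
    "system_map", "after_launch", "mind_changing_feedback", "top_3_risks",
    "good_enough_30_days", "learning_if_fail",
]


def unanswered(answers: dict[str, str]) -> list[str]:
    """Return the questions that have no answer or only whitespace."""
    return [q for q in QUESTIONS if not answers.get(q, "").strip()]


# Hypothetical, partly filled-in answers.
answers = {
    "real_user": "friends who already read tarot weekly",
    "single_outcome": "5 people use it twice in one week",
    "after_launch": "   ",  # blank answers count as unanswered
}

gaps = unanswered(answers)
if gaps:
    print(f"{len(gaps)} of {len(QUESTIONS)} still open, hold the prompts:")
    for q in gaps:
        print(f"  - {q}")
else:
    print("All answered, go write prompts.")
```

It checks presence, not quality. Whether an answer is honest is still on you.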

FAQ (Real Questions I Think Are Relevant to Most of Us)

Q1: Is prompt engineering dead?

No. It’s still valuable, and research shows it is a fast‑growing, actively studied practice. But it’s only one layer of the stack. It does not replace intent. [R4]

Q2: Do I need systems thinking for a small solo builder project?

If the project is just for fun, you don’t. If you want it to survive after launch, yes, at least a light version. [R1]

Q3: Strategic intent sounds too corporate. Can I keep it simple?

Yes. Strategic intent can be a one‑page note. It’s the direction, not a 50‑page deck. [R2]

Q4: What if I don’t know the intent yet?

Then your first task isn’t “write prompts.” Your first task is “explore and discover intent.” That could be interviews, market research, or even a personal reflection session. [R1][R2]

Q5: Does this apply to AI agents too?

Yes. Agents are just tools in a loop. Without intent, the loop just spins. [R8]

Discussion

- Have you ever built an AI demo that you never touched again?

- What is the smallest “intent statement” you’ve ever written that still worked?

- Do you think solo builders need more planning or more speed?

- If prompt engineering was the 2024 keyword, what’s your 2026 keyword?

- Should “intent” be taught in AI coding bootcamps?

I’m curious. I’m still learning. I can be wrong. So please share your story.

References

[R1] Interaction Design Foundation. (n.d.). What is systems thinking? https://ixdf.org/literature/topics/systems-thinking

[R2] Association for Project Management. (n.d.). What is strategic intent? https://www.apm.org.uk/resources/what-is-project-management/what-is-strategic-intent/

[R3] Hamel, G., & Prahalad, C. K. (1989). Strategic intent. Harvard Business Review, 67(3), 63–76. https://hbr.org/1989/05/strategic-intent-2

[R4] Sahoo, P., Singh, A. K., Saha, S., Jain, V., Mondal, S., & Chadha, A. (2024). A systematic survey of prompt engineering in large language models: Techniques and applications. arXiv. https://arxiv.org/abs/2402.07927

[R5] Vu, A., & Oppenlaender, J. (2025). Prompt engineer: Analyzing hard and soft skill requirements in the AI job market. arXiv. https://arxiv.org/abs/2506.00058

[R6] Shoemaker, T. (2025, July 30). The steady rise of the nonemployer business. U.S. Census Bureau. https://www.census.gov/library/stories/2025/07/nonemployer-business-growth.html

[R7] Le Cuong. (2025, September 7). My first vibe coding journey: From zero to working app in 4 hours (And what I learned about spec-driven development, SDD). https://pmlecuong.com/my-first-vibe-coding-journey-from-zero-to-working-app-in-4-hours-and-what-i-learned-about-product-driven-development/

[R8] Le Cuong. (2025, September 23). Agents are just tools in a loop—and that’s why they work. https://pmlecuong.com/agents-are-just-tools-in-a-loop-and-that-s-why-they-work/

[R9] Ajzen, I. (1991). The theory of planned behavior. Organizational Behavior and Human Decision Processes, 50(2), 179–211. https://people.umass.edu/aizen/pubs/tpb.1991.pdf

[R10] Davis, F. D. (1989). Perceived usefulness, perceived ease of use, and user acceptance of information technology. MIS Quarterly, 13(3), 319–340. https://www.jstor.org/stable/249008

[R11] Armitage, C. J., & Conner, M. (2001). Efficacy of the theory of planned behaviour: A meta-analytic review. British Journal of Social Psychology, 40(4), 471–499. https://pubmed.ncbi.nlm.nih.gov/11795063/

[R12] Sheeran, P. (2002). Intention–behavior relations: A conceptual and empirical review. European Review of Social Psychology, 12(1), 1–36. https://www.tandfonline.com/doi/abs/10.1080/14792772143000003

[R13] Ajzen, I. (2002). Perceived behavioral control, self-efficacy, locus of control, and the theory of planned behavior. Journal of Applied Social Psychology, 32(4), 665–683. https://people.umass.edu/aizen/pubs/pbc.pdf

[R14] Venkatesh, V., & Davis, F. D. (2000). A theoretical extension of the technology acceptance model: Four longitudinal field studies. Management Science, 46(2), 186–204. https://ideas.repec.org/r/inm/ormnsc/v46y2000i2p186-204.html

[R15] Venkatesh, V., & Bala, H. (2008). Technology acceptance model 3 and a research agenda on interventions. Decision Sciences, 39(2), 273–315. https://onlinelibrary.wiley.com/doi/10.1111/j.1540-5915.2008.00192.x

[R16] Fishbein, M., & Ajzen, I. (1975). Belief, attitude, intention, and behavior: An introduction to theory and research. Addison-Wesley. https://people.umass.edu/aizen/f%26a1975.html

[R17] Meadows, D. H. (2008). Thinking in systems: A primer. Chelsea Green Publishing. https://www.chelseagreen.com/product/thinking-in-systems/

[R18] Sterman, J. D. (2000). Business dynamics: Systems thinking and modeling for a complex world. McGraw-Hill/Irwin. https://www.mheducation.com/highered/product/Business-Dynamics-Sterman.html

Related reading

Read the related posts here:

- AI Coder Timeline 2025-Early2026: From Prompting to Agentic Software Teams

- Context Engineering vs Vibe Coding: A Business Person's Technical Discovery (Part 1 of 2)

- Agents Are Just Tools in a Loop—and That’s Why They Work

Image Disclosure

Some images used in this post were created with AI. They may appear realistic, but they do not depict real scenes or real photographs unless explicitly stated otherwise. When a realistic image of me is an actual photograph, the caption will clearly note that it is a real image.

